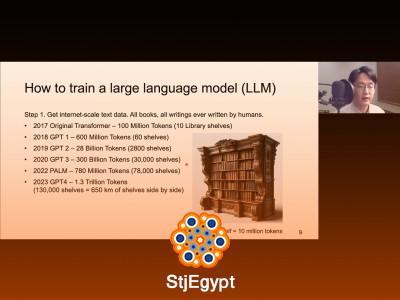

This comprehensive freeCodeCamp tutorial teaches how to build a Large Language Model (LLM) completely from scratch using Python and PyTorch. It is designed for learners who want to understand the full technical pipeline behind modern AI systems like GPT.

The course begins with data preparation and text processing, where you learn how raw text is converted into usable training data. It introduces tokenization techniques, including character-level tokenizers, and explains how text is transformed into numerical representations for machine learning models.

Next, the tutorial covers fundamental machine learning concepts such as tensors, linear algebra basics, embeddings, and matrix operations. You will also learn how training and validation datasets are created and used to evaluate model performance.

The course then moves into building a Bigram language model and gradually evolves into a full transformer-based GPT architecture. You will learn key concepts such as self-attention, positional encoding, multi-head attention, and transformer blocks.

Advanced sections cover training loops, gradient descent, optimizers, activation functions, and model evaluation. The tutorial also explains GPU acceleration using CUDA and how large-scale datasets like OpenWebText are used in training real LLMs.

By the end of this course, you will have built a working GPT-style model from scratch and gained a deep understanding of how Large Language Models are designed, trained, and deployed in real-world AI systems.