Stanford CME295 Transformers & LLMs – Autumn 2025

Number of lessons: 9

Course duration: 16:10:42

Accredited certificate: Yes

Register for the course to obtain a certificate. To obtain a certificate:

- 1- Register

- 2- Watch the entire course

- 3- Track your course completion percentage as it progresses

- 4- Once you finish, the certificate appears in your personal profile


About the course

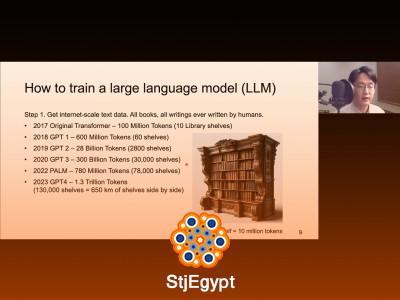

This course, Stanford CME295 Transformers & LLMs – Autumn 2025, provides an in-depth exploration of Transformer architectures and their applications in large language models. Starting from the fundamentals of Transformers, students learn how transformer-based models are structured, trained, and fine-tuned for various NLP tasks.

The series covers advanced concepts including LLM training pipelines, model tuning, reasoning capabilities, and agentic behavior in AI systems. Learners also gain insights into LLM evaluation methods to assess performance and ensure reliability in real-world applications.

Each lecture combines theoretical foundations with practical examples, so learners see both the mathematical principles and the implementation strategies behind state-of-the-art language models. By the end of the course, students will understand how to build, fine-tune, reason with, and evaluate large language models, preparing them for research, development, and deployment in AI-driven applications.