Stanford CS336 – Language Modeling from Scratch | Spring 2025

عدد الدروس : 17 عدد ساعات الدورة : 22:19:04 شهادة معتمدة : نعم التسجيل في الدورة للحصول على شهادةللحصول على شهادة

- 1- التسجيل

- 2- مشاهدة الكورس كاملا

- 3- متابعة نسبة اكتمال الكورس تدريجيا

- 4- بعد الانتهاء تظهر الشهادة في الملف الشخصي الخاص بك

قائمة الدروس

عن الدورة

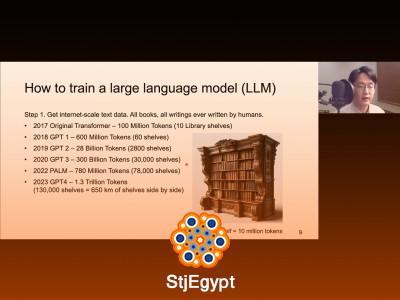

Stanford’s CS336: Language Modeling from Scratch course provides an in-depth exploration of how to build and scale language models using modern deep learning tools. The course begins with an overview of language modeling principles, tokenization, and text preprocessing, ensuring a solid foundation in NLP. Students then dive into PyTorch programming and resource accounting, understanding how to efficiently implement models and manage computational resources.

Subsequent lectures cover model architectures, hyperparameters, and advanced strategies such as mixture-of-experts models, showcasing state-of-the-art techniques for increasing model capacity. The course also examines GPU usage, kernels, and the Triton framework, providing practical guidance for high-performance computing in model training.

Parallelism strategies are discussed in depth, including model and data parallel approaches, enabling learners to train large models efficiently. The curriculum includes lectures on scaling laws, helping students understand how model performance scales with data and compute. Finally, inference methods are covered, teaching best practices for deploying trained language models.

By the end of the course, learners gain both theoretical and practical expertise in constructing, optimizing, and scaling language models from scratch, preparing them for research or applied work in NLP and AI.