Intro to Large Language Models – Andrej Karpathy

Lessons: 14 | Course duration: 23:07:16 | Accredited certificate: Yes

To get a certificate:

1. Register.

2. Watch the entire course.

3. Track your course completion percentage as you progress.

4. Once you finish, the certificate appears in your profile.

About the course

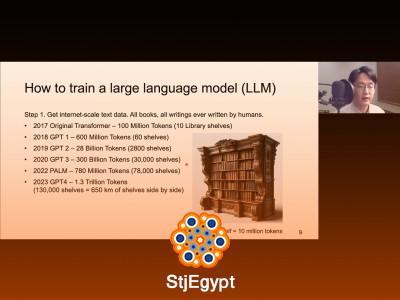

This course, Intro to Large Language Models by Andrej Karpathy, offers a comprehensive introduction to LLMs and the deep learning concepts that power them. Starting with a clear explanation of neural networks and backpropagation, the course gradually builds into practical coding exercises that guide learners through creating language models from scratch.

Students will explore language modeling principles by building simple models such as makemore, then work through multi-layer perceptrons (MLPs), activations, gradients, batch normalization, and backpropagation techniques. The course also covers more complex architectures such as WaveNet and the construction of a GPT tokenizer, and it culminates in implementing a GPT model from scratch and reproducing the GPT-2 (124M) model.

Throughout the lectures, Karpathy emphasizes the math behind machine learning, coding practices, and model design, making complex topics approachable for beginners and intermediate learners. By the end, students have the theoretical knowledge and hands-on experience needed to understand and experiment with modern large language models, preparing them for further study or real-world AI applications.